The missing ingredient in successful AI transformation isn’t just technology or strategy. It’s how your teams experiment, learn, and manage disagreement, a critical factor in driving innovation and performance with artificial intelligence.

In conversations with leaders across multiple continents, from startup founders in Southeast Asia to enterprise CIOs in Europe, I keep hearing the same frustration: “We have the talent, we have the tools, and we have the strategy. So why aren’t we moving faster on AI?”

The answer is rarely what they expect. It’s not a technology gap, and it’s not a talent gap. It’s a team dynamics gap, and it shows up in how (or whether) people on those teams actually challenge each other’s thinking.

The Shift: AI Demands A Different Kind Of Team Intelligence

The landscape of work has fundamentally changed. According to the World Economic Forum, nearly half of workers’ core skills will be disrupted within four years. By 2030, 86% of companies will see jobs, processes, or roles change due to AI. This is not a trend on the horizon; it is happening now.

But here is what most leaders miss: AI adoption does not operate in a domain of clear cause and effect. There is no established playbook, and leaders cannot predict the future by analyzing the past, no matter how hard they try. They are navigating what complexity researchers call a “complex domain,” where answers emerge only as they move forward. That demands experimentation, learning, and immediate adaptation from teams.

And that is where things break down. Research from MIT found that the highest-performing teams are not the ones with the highest average IQ or the smartest individual in the room. What actually determines collective intelligence is the average social sensitivity of group members, the extent to which conversations are not dominated by a few voices, and the percentage of women in the group (women tend to have higher social sensitivity). In other words, it is the emotional and interpersonal capabilities, not the cognitive ones, that determine whether a team can innovate well in conditions of uncertainty.

Have you ever seen a group of highly intelligent people get very little done because there was an abundance of knowledge and ego but a shortage of humility and listening skills? You get the idea. Most of the leaders I work with understand this concept, yet their teams continue to underperform. The reason? Those teams don’t know how to disagree well.

The Gap: Most Organizations Are Too Nice To Innovate

Most people think psychological safety means creating an environment where everyone feels comfortable. That is both erroneous and potentially dangerous. Psychological safety, as Harvard professor Amy Edmondson defines it, is a learning culture: a place where people can ask questions, admit mistakes, challenge ideas, and speak up when they disagree, all while maintaining mutual respect.

The goal is not comfort; it is learning and growth. That requires a healthy dose of something I call “generative disagreement.” It’s a type of cognitive friction that produces better ideas and sounder decisions.

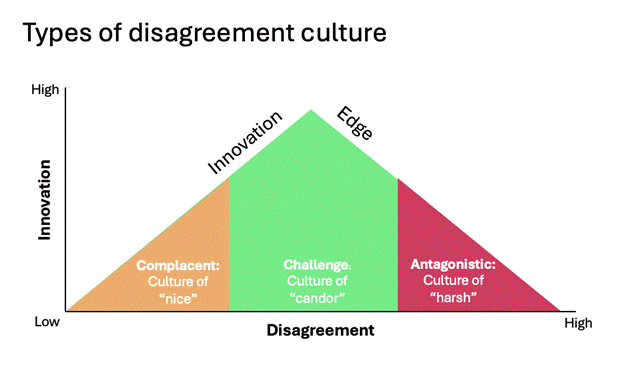

When it comes to disagreement, organizations operate along a spectrum. On the left side, there is too little, which creates complacency (a culture of “nice”). On the right side, there is too much, which creates antagonism (a culture of “harsh”). In the middle is an optimal level of disagreement that creates a healthy challenge (a culture of “candor”). That middle zone is where teams maximize innovation and creativity.

In my experience working with thousands of leaders globally, about 70% of organizations land on the left side of this spectrum. They are too nice. They don’t lack opinions; they just don’t want to risk angering or offending their colleagues. So they avoid conflict entirely rather than developing the skills to do it productively. The result is predictable: teams wind up running with suboptimal ideas (usually the leader’s), resorting to passive-aggressive maneuvering, or engaging in wars of attrition where the last person standing wins. These are all poor ways to arrive at a decision, and the cost becomes especially high when the stakes involve how the organization adopts AI.

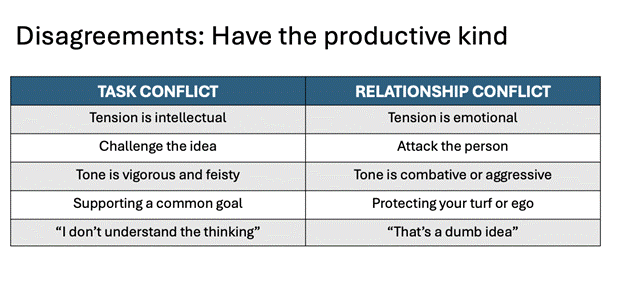

To help leaders promote more generative disagreement, I teach them to distinguish between two types of conflict. The first is task conflict, which involves disagreement about ideas, strategies, and approaches. The second is relationship conflict, where things become personal. Task conflict improves outcomes. Relationship conflict does the opposite. Research has found that the highest-performing teams maximize task conflict while limiting relationship conflict. But most organizations have not invested in these skills. They can’t tell the difference, so they wind up suppressing both.

This is the real gap in AI transformation. Many organizations are pouring resources into technology, strategy, and change management, but few are investing in the team-level emotional and interpersonal skills that determine whether those investments actually drive innovation.

From Theory To Practice: What It Looks Like When Leaders Get This Right

I have worked with C-level executives at multiple companies who were frustrated that their leadership teams would not push back on ideas. These leaders wanted to debate. They wanted their people to challenge ideas and surface risks before decisions were made. But meeting after meeting, they got smiling faces and polite nods.

The problem was never that team members lacked different viewpoints. It was that the environment had not been designed to make disagreement expected, supported, and rewarded.

In each case, we worked together to build certain mechanisms. The leaders began modeling intellectual humility, openly asking their teams to pressure-test their thinking. They would say things like, “What am I missing? I am sure I am not the smartest person in this room,” and, “Poke some holes in my ideas.” When someone spoke up with a dissenting view, the leader would publicly commend it: “That is exactly the kind of pushback we need more of.” The signal was unmistakable: disagreement wasn’t only tolerated, it was expected and appreciated.

These shifts did not happen overnight. But over time, the new behaviors became consistent, and decision quality improved. Teams were identifying risks earlier, surfacing better alternatives, and moving faster on initiatives that previously would have been undermined by silent detractors.

What Comes Next: Building Teams That Can Think Together Under Pressure

The organizations that will lead in AI adoption are not necessarily the ones with the most technical talent or the largest budgets. They will be the ones that figure out how to unlock what Harvard digital innovation expert Linda Hill calls “collective genius”: a team’s ability to think, create, and iterate together in ways that make the group smarter than any individual.

That requires three things from leaders.

First, build trust through positive relationships. Research consistently shows that the most important facet of trust is not competence or consistency. It is the belief that the other person is genuinely looking out for your interests. When employees trust that their leaders are helping them thrive through AI transformation (not just driving productivity), they engage differently.

Second, create room for intelligent failure. Innovation requires experimentation, which by definition means many things won’t work. Leaders who punish failures of any type shut down the very learning loops that AI adoption demands. The most effective organizations treat early-stage AI initiatives as reversible experiments: try something novel (at the right scale, of course), learn from it, adjust, and iterate.

Third, make disagreement a team norm. This is not about encouraging conflict for its own sake. It is about building the skills and culture where people can respectfully challenge each other’s perspectives, knowing that the goal is a better outcome for everyone.

The leaders I have seen get this right share a common trait: they understand that AI transformation is not simply a cognitive process; it is also a social-emotional one. They expertly integrate their EQ with their IQ and possess what I call the EPIQ skill set. They see the anxiety, uncertainty, and excitement that come with this moment not as emotional factors to ignore or manage around but as the crux of the adaptability challenge. Leaders and teams that learn to channel these emotions into productive energy will be the ones that move fastest and farthest.

The good news is that these are learnable skills. The bad news is that most organizations are not learning them. Emotional intelligence and interpersonal skills are not a “nice-to-have.” They are mission-critical skills for every organization striving to move deliberately and confidently with AI transformation. And in a world where uncertainty is constant and the pace of change is only accelerating, the gap between leaders and teams that possess EPIQ skills and those that do not will only grow wider.