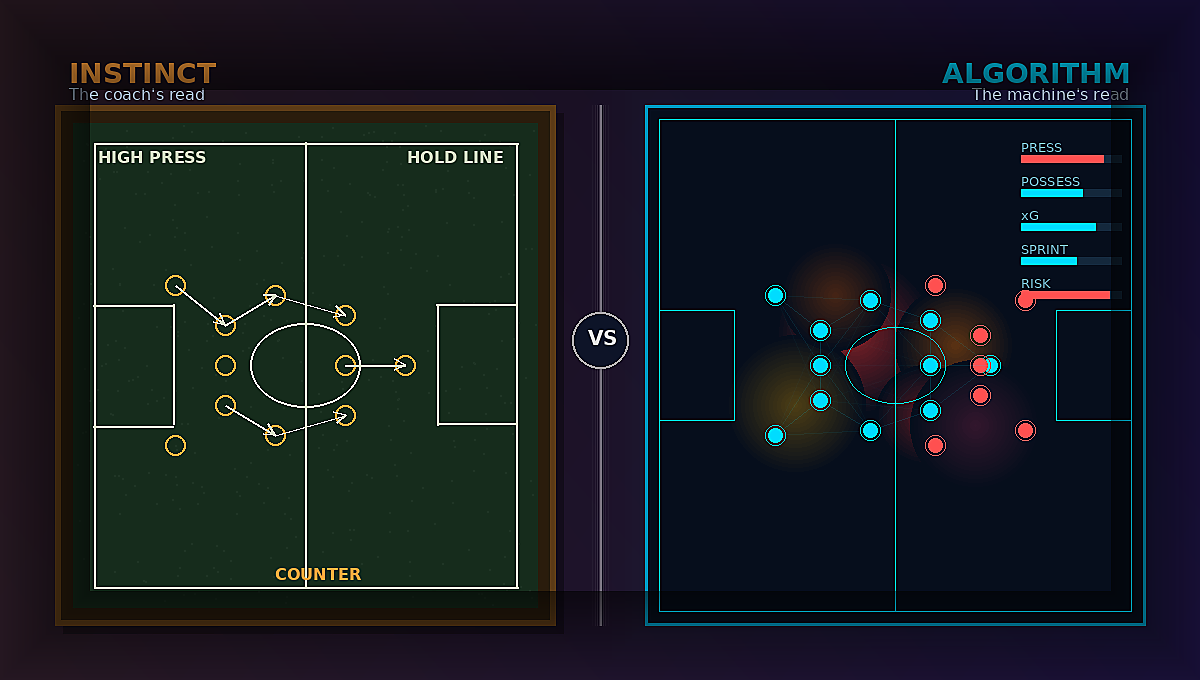

The data is smarter than ever. The decisions are harder than ever. And somewhere between instinct and algorithm, football (soccer) is trying to figure out who still gets to call the shot.

If you've spent any time in professional sport over the last two or three years, you've felt it.

Who gets to decide?

Is it the head coach with twenty years of pattern recognition embedded in his or her nervous system? Or the analyst running predictive models trained on tens of thousands of match events? Or the board demanding measurable return on investment? Or the algorithm that identifies relationships no human eye could possibly detect?

The fans know it too. They yell about VAR. There is plenty about the modern game they don't like. But most of them also quietly admit that parts of it have gotten much better.

THE TOOLS ARRIVED BEFORE THE TRUST DID

As AI systems become embedded across global football operations, from recruitment to load management to fan engagement, organizations are not simply adopting new tools. They are renegotiating trust. And that negotiation is uncomfortable.

For decades, football decisions were made under constraint. Performance data was fragmented. International communication and collaboration were slow, and in some ways they still are. Intuition was a differentiator.

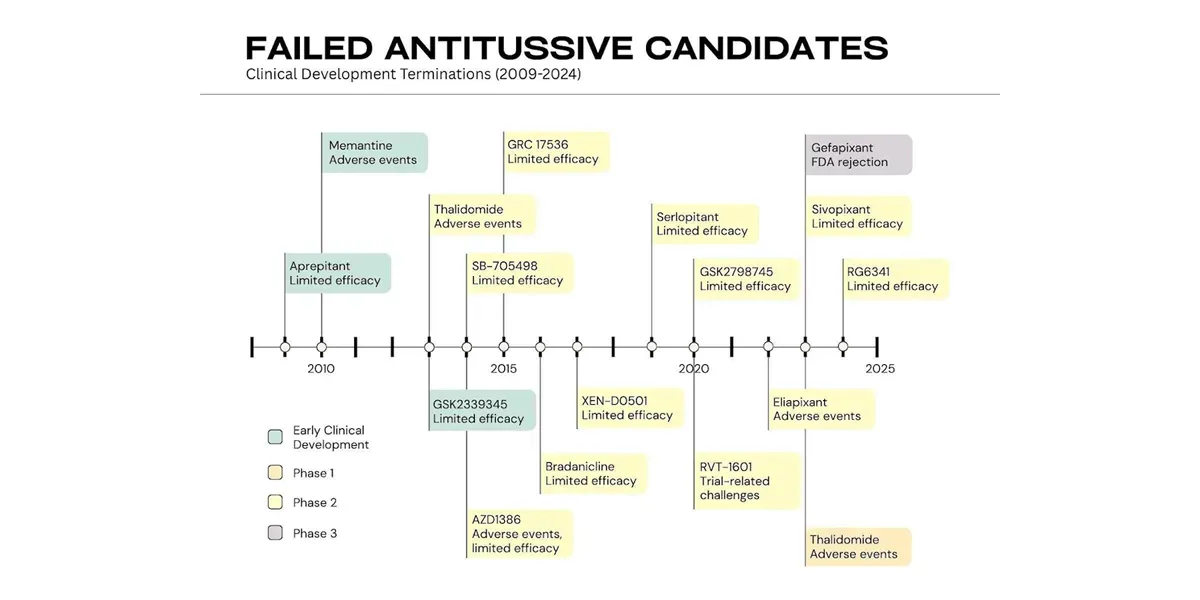

Today, those constraints have largely collapsed. Clubs at nearly every tier now have access to event data across leagues, computer vision tracking, predictive injury modeling, contract valuation simulations, and automated opponent analysis. What was once available only to elite federations is gradually becoming accessible to mid-tier clubs and emerging markets.

This shift is global, and its promise is simple and compelling: more data should mean fewer mistakes. As of 2025, three out of four professional sports teams globally rely on real-time AI-driven analytics for performance and strategy, and football leads all team sports in adoption rate.

THE HUMAN SYSTEM HASN'T CAUGHT UP

However, beneath that promise lies a deeper tension. We have optimized our ability to generate insight far faster than we have evolved our capacity to absorb it, let alone come to terms with what it can do. The division between human expertise and machine capability is not purely technical. It is psychological, organizational, and cultural.

Artificial intelligence excels at recognizing patterns across large datasets. It can detect performance decline, predict fatigue risk, and flag underappreciated players in secondary leagues. Premier League clubs using AI-powered workload monitoring have reported injury rate reductions of over 20%. Yet football is not only a data problem. It is a meaning problem. A model may identify declining sprint metrics. A coach may see a player dealing with personal stress, adjusting to a new country, or responding to locker room dynamics.

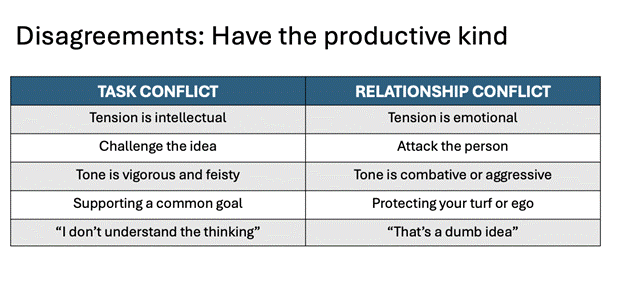

AI recognizes correlation. Humans interpret context.

Once algorithms begin influencing playing time, contract renewals, and youth selection, the concern is not always that the numbers are wrong. The concern is that nuance disappears. And nuance is where identity lives.

There is also the matter of accountability. In traditional football hierarchies, responsibility is clear. The sporting director signs the transfer. The coach selects the lineup. The board approves the budget. When decisions are described as AI-informed, ownership may blur. If a model strongly recommends a player acquisition and it fails, who is accountable?

Executives quietly wrestle with a difficult question. Are we augmenting human decision-making, or are we insulating ourselves behind algorithms?

Football makes this conversation more complicated because it operates within an emotional economy. This is not manufacturing or logistics. It is tribal, cultural, and often irrational. Supporters do not chant for data models. They chant for heroes. They attach meaning to narrative, sacrifice, and identity.

As AI systems begin shaping recruitment pipelines and tactical frameworks, some stakeholders fear sterilization. They worry about artistry being reduced to optimization. There is unease that algorithmic convergence could standardize playing styles across leagues and continents, subtly narrowing the diversity that gives football its richness.

At the human level, adoption anxiety is real. For analysts, scouts, and coaches, AI can feel existential. If systems can automatically generate scouting reports, simulate match outcomes, forecast fatigue, and suggest tactical tweaks, where does that leave decades of experiential mastery?

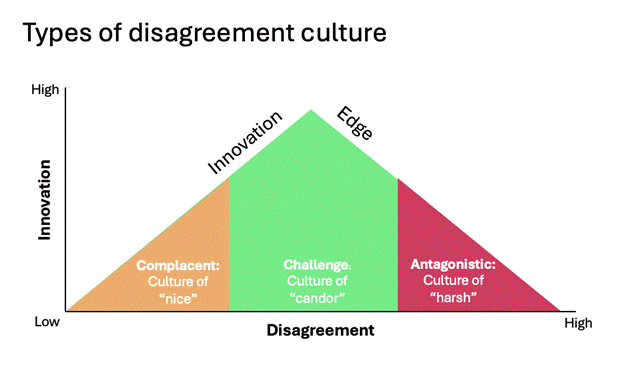

The anxiety is rarely stated openly. But it surfaces as resistance, as stalled implementation, as quiet skepticism during executive meetings.

Historically, technology replaced repetitive labor. Artificial intelligence increasingly encroaches on cognitive territory. That feels different because it calls identity into question, not just the task.

From a purely operational perspective, resisting AI makes little strategic sense. Competitive landscapes are tightening. Player markets are globalizing. Financial scrutiny is increasing. Ignoring intelligent systems is not a sustainable path.

Yet adoption still feels threatening, and that is the strange part, because it shouldn't. Who wants to spend days on end labelling images and people when they could be outside training and learning in the real world? Part of that fear stems from identity disruption. Many leaders in sport built their careers on intuition refined over decades. When an algorithm disagrees with a veteran scout, it does not simply challenge a conclusion. It challenges authority, experience, and, very likely, self-worth.

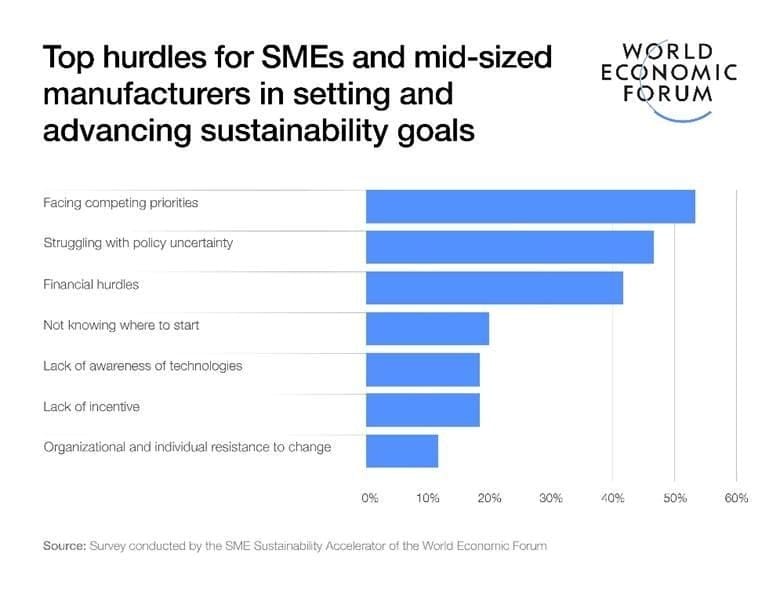

Another part stems from cultural lag. Technology adoption cycles move in quarters. Cultural adaptation unfolds over years. Boards push for innovation to sustain competitiveness. Coaching staff require psychological safety to experiment. Youth academies rely on trust built over generations. When AI integration outpaces organizational readiness, friction is inevitable.

There is also the issue of opacity. When a neural network flags a prospect as high-potential but cannot explain its reasoning in language accessible to decision-makers, skepticism from the people it influences is entirely rational. Transparency is not a luxury in football ecosystems. It is essential. Without it, adoption can feel like surrender, and resistance becomes the default.

COEXISTENCE IS THE COMPETITIVE ADVANTAGE

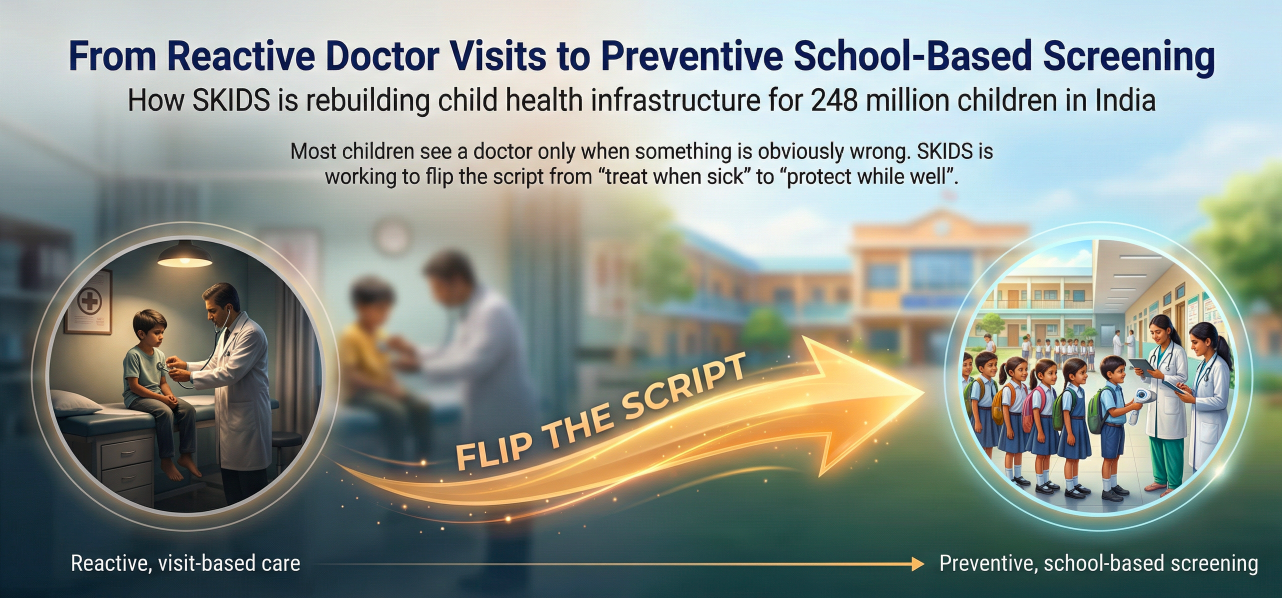

Looking forward, the future of AI in football will not be determined solely by model accuracy. It will be shaped by how organizations design coexistence between machine precision and human decision-making. With AI, it is still the beautiful game.

The most durable structures will be human-in-the-loop systems in which algorithms surface insights while decisions remain explicitly owned by people. Rather than automation replacing authority, augmentation will define the next era. Clear decision rights will matter as much as predictive power.
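For readers who build these systems, here is one way the idea could look in practice. It is a minimal Python sketch, not a description of any club's actual tooling: the model's output is advisory, and no decision can be recorded without a named human owner. Every name in it (`Recommendation`, `Decision`, `decide`) is hypothetical and invented for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Recommendation:
    """Advisory output from a model (hypothetical structure)."""
    subject: str       # e.g. a transfer target or a lineup choice
    suggestion: str    # what the model proposes
    confidence: float  # the model's own score, assumed to lie in [0, 1]
    rationale: str     # plain-language explanation for decision-makers


@dataclass(frozen=True)
class Decision:
    """A final call that always carries a named human owner."""
    recommendation: Recommendation
    owner: str         # the accountable person, e.g. "sporting director"
    accepted: bool     # whether the owner followed the model
    note: str = ""     # the owner's own reasoning, if any


def decide(rec: Recommendation, owner: str, accepted: bool, note: str = "") -> Decision:
    """Record a decision; refuse to record one without an accountable human."""
    if not owner.strip():
        raise ValueError("every decision needs a named human owner")
    return Decision(rec, owner.strip(), accepted, note.strip())


# Usage: the model suggests, the sporting director decides and owns the outcome.
rec = Recommendation(
    subject="left-back target",
    suggestion="sign",
    confidence=0.82,
    rationale="strong underlying numbers in a secondary league",
)
decision = decide(
    rec,
    owner="sporting director",
    accepted=False,
    note="player is still adjusting to a new country",
)
print(decision.owner, decision.accepted)
```

The design choice is the point: the system can be overruled, but it can never be the one held responsible, because the record is incomplete until a person signs it.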

Explainability will likely become a competitive advantage. Systems that translate model outputs into interpretable reasoning will earn trust faster across locker rooms and boardrooms alike. Ethical governance will also shift from an afterthought to a strategic differentiator. Questions about biometric tracking, youth data collection, bias mitigation, and data ownership will not go away. Organizations that address them proactively will build stronger ecosystems.

Above all, it is worth remembering that football remains a human theater. AI may optimize variables, forecast probabilities, and compress analysis time. It cannot replicate collective belief, resilience under pressure, or the chemistry that turns individuals into a team.

We are not witnessing the elimination of human judgment in football. We are watching it being confronted, in real time.